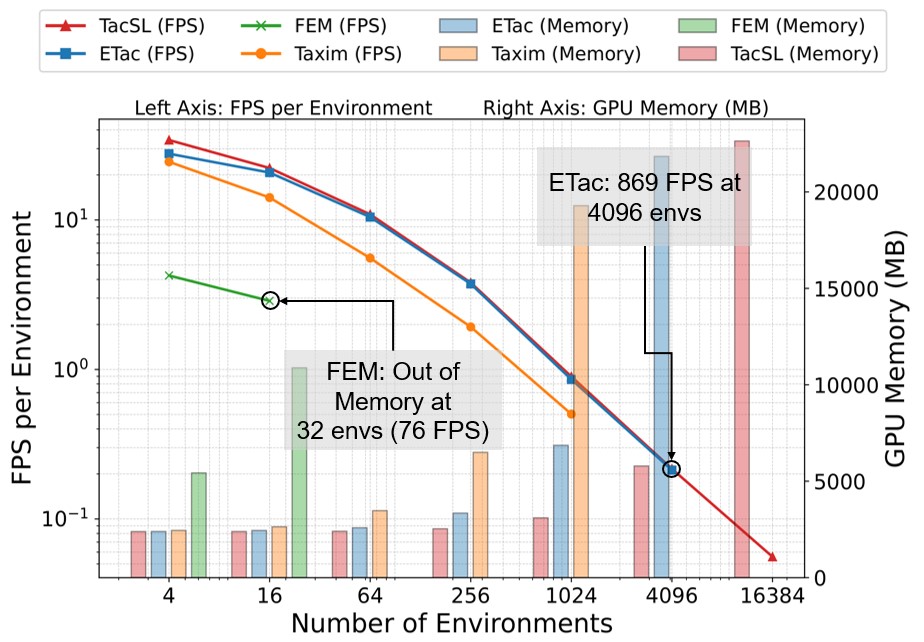

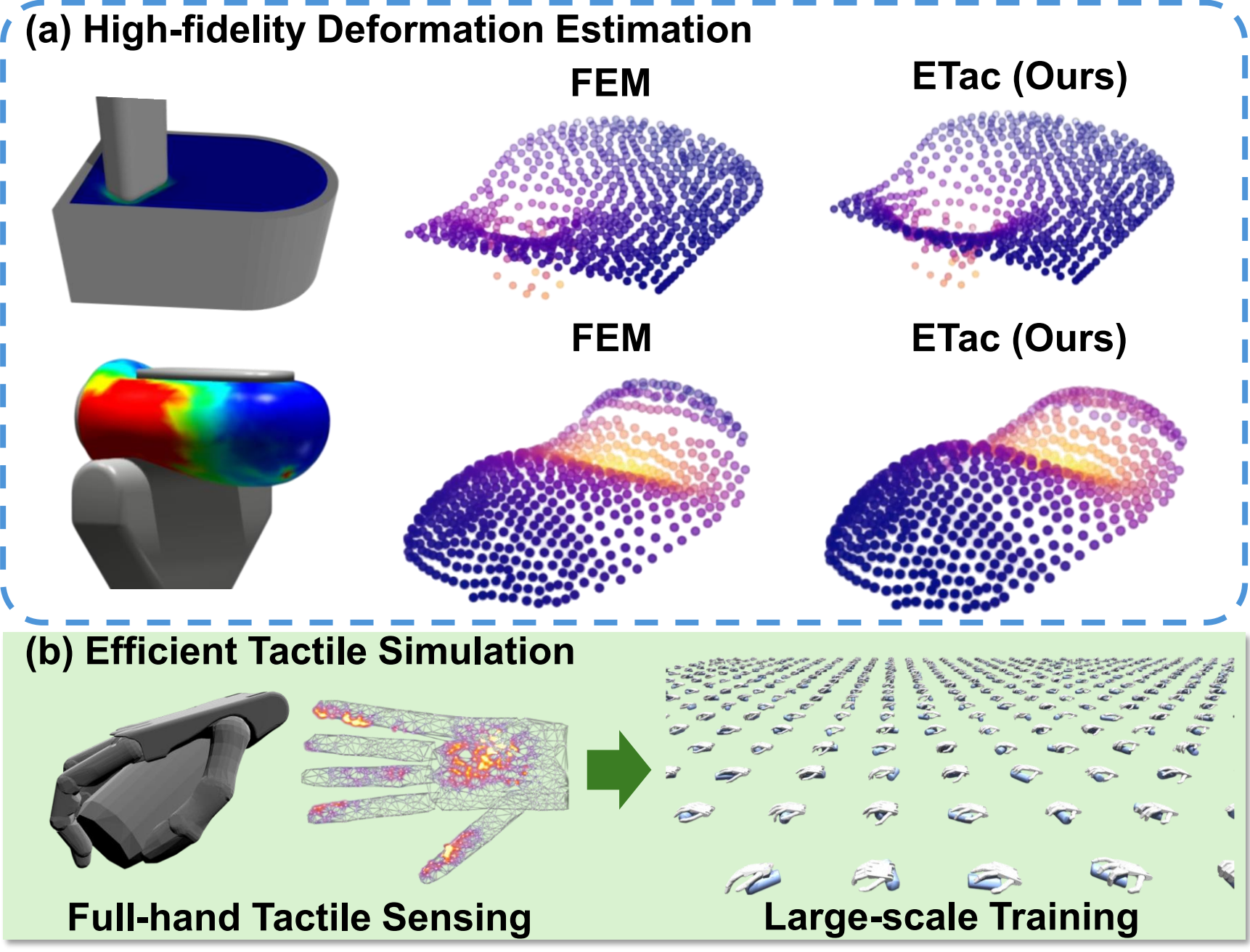

Tactile sensors are increasingly integrated into dexterous robotic manipulators to enhance contact perception. However, learning manipulation policies that rely on tactile sensing remains challenging, primarily because of the trade-off between the fidelity and the computational cost of soft-body simulation. To address this, we present ETac, a tactile simulation framework that models elastomeric soft-body interactions with both high fidelity and high efficiency. ETac distills knowledge from high-fidelity but computationally expensive finite element method (FEM) simulations into a lightweight deformation propagation model, retaining high simulation quality while enabling large-scale policy training. When used as a soft-body simulation backend, ETac produces surface deformation estimates comparable to those of FEM and is applicable to building digital twins of tactile sensors. We further showcase its capability by training a blind grasping policy that leverages large-area tactile feedback to manipulate diverse objects. Running on a single RTX 4090 GPU, ETac supports reinforcement learning across 4,096 parallel environments with a total throughput of 869 FPS. The resulting policy reaches an average success rate of 84.45% across four object types, underscoring ETac's potential to make tactile-based skill learning both efficient and scalable.
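The "distill once, evaluate cheaply" idea behind ETac can be illustrated with a toy example. ETac's actual propagation model is not specified here, so the sketch below is only an assumption-laden stand-in: a linear 1D membrane solve plays the role of the expensive FEM "teacher", and the "student" is a dense linear operator fitted to teacher samples by least squares and then applied to a whole batch of environments with one matrix multiply. Real elastomer contact is nonlinear; the function names (`teacher_solve`, `distill`, `student_predict`) are hypothetical.

```python
import numpy as np


def chain_laplacian(n: int) -> np.ndarray:
    """Graph Laplacian of a chain of n surface nodes with free ends."""
    lap = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
    lap[0, 0] = lap[-1, -1] = 1.0
    return lap


def teacher_solve(indent: np.ndarray, stiffness: float = 5.0) -> np.ndarray:
    """ASSUMPTION: stand-in for an FEM solve. Solves the membrane-like linear
    system (I + stiffness * L) u = indent for every row of `indent`."""
    n = indent.shape[-1]
    a = np.eye(n) + stiffness * chain_laplacian(n)
    return np.linalg.solve(a, indent.T).T


def distill(n_nodes: int, n_samples: int, rng: np.random.Generator) -> np.ndarray:
    """Fit a linear propagation operator W such that teacher(x) ~= x @ W."""
    x = np.zeros((n_samples, n_nodes))
    for row in x:  # each row is a view, so this fills x in place
        idx = rng.choice(n_nodes, size=3, replace=False)  # sparse contact nodes
        row[idx] = rng.uniform(0.1, 1.0, size=3)          # indentation depths
    y = teacher_solve(x)                                   # expensive labels
    w, *_ = np.linalg.lstsq(x, y, rcond=None)
    return w


def student_predict(w: np.ndarray, indent_batch: np.ndarray) -> np.ndarray:
    """Cheap batched inference: one matrix multiply for all environments."""
    return indent_batch @ w


rng = np.random.default_rng(0)
w = distill(n_nodes=64, n_samples=400, rng=rng)

# Batch size mirrors the 4,096 parallel environments mentioned in the text.
probe = np.zeros((4096, 64))
probe[:, 30] = rng.uniform(0.0, 1.0, size=4096)
err = np.max(np.abs(student_predict(w, probe) - teacher_solve(probe)))
print(f"max abs error vs teacher: {err:.2e}")
```

Because the toy teacher is linear, the student recovers it essentially exactly; the point is only the workflow of paying the teacher's cost offline and reusing a cheap surrogate inside the training loop.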

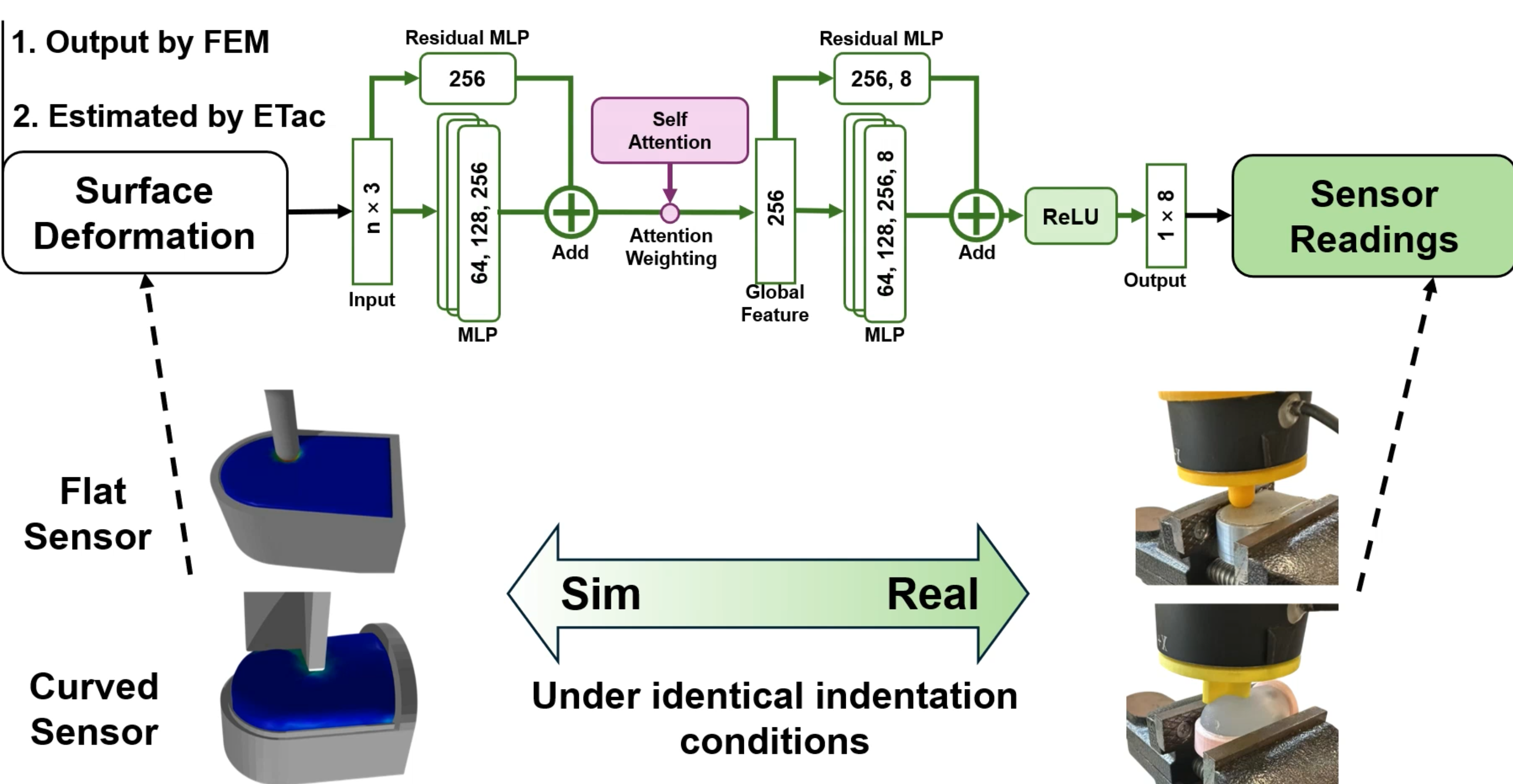

We set up two tactile sensors, one with a flat elastomer layer and one with a curved elastomer layer, in both real and simulated settings, recording real sensor readings alongside the surface deformations computed by ETac and by FEM. A PointNet model was then trained to map these displacement fields to the corresponding sensor responses.
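A PointNet-style regressor is a natural fit here because a displacement field on a sensor surface is an unordered set of points. The following is a minimal, untrained NumPy sketch of the forward pass, not the authors' network: it assumes each point carries its rest position and displacement (6 features) and a hypothetical 16-dimensional sensor response, and the layer widths are arbitrary. The original PointNet's input-transform (T-Net) and batch normalization are omitted.

```python
import numpy as np


def init_mlp(sizes, rng):
    """He-initialized (weight, bias) pairs for consecutive layer sizes."""
    return [(rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]


def mlp(x, layers, final_relu=True):
    """Apply dense layers with ReLU (optionally skipping it on the last layer)."""
    for i, (w, b) in enumerate(layers):
        x = x @ w + b
        if i < len(layers) - 1 or final_relu:
            x = np.maximum(x, 0.0)
    return x


def pointnet_forward(points, shared, head):
    """points: (N, 6) = xyz rest position + xyz displacement per surface node."""
    per_point = mlp(points, shared)        # (N, F): same weights for every point
    global_feat = per_point.max(axis=0)    # symmetric max-pool -> order invariant
    return mlp(global_feat, head, final_relu=False)  # (output_dim,)


rng = np.random.default_rng(0)
shared = init_mlp([6, 64, 128], rng)
head = init_mlp([128, 64, 16], rng)        # 16 = hypothetical sensor output size
cloud = rng.normal(size=(500, 6))          # placeholder, not real sensor data
out = pointnet_forward(cloud, shared, head)
shuffled = pointnet_forward(cloud[rng.permutation(500)], shared, head)
print(out.shape, np.allclose(out, shuffled))
```

The max-pool is what makes the prediction independent of the order in which surface nodes are listed, so the same model can consume displacement fields from ETac, FEM, or differently meshed flat and curved sensors without a fixed node ordering.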